7 Break-down Plots for Interactions

In Chapter 6, we presented a model-agnostic approach to the calculation of the attribution of an explanatory variable to a model’s predictions. However, for some models, like models with interactions, the results of the method introduced in Chapter 6 depend on the ordering of the explanatory variables that are used in computations.

In this chapter, we present an algorithm that addresses the issue. In particular, the algorithm identifies interactions between pairs of variables and takes them into account when constructing break-down (BD) plots. In our presentation, we focus on pairwise interactions that involve pairs of explanatory variables, but the algorithm can be easily extended to interactions involving a larger number of variables.

7.1 Intuition

Interaction (deviation from additivity) means that the effect of an explanatory variable depends on the value(s) of other variable(s). To illustrate such a situation, we use the Titanic dataset (see Section 4.1). For the sake of simplicity, we consider only two variables, age and class. Age is a continuous variable, but we will use a dichotomized version of it, with two levels: boys (0-16 years old) and adults (17+ years old). Also, for class, we will consider just “2nd class” and “other”.

Table 7.1 shows percentages of survivors for boys and adult men travelling in the second class and other classes on Titanic. Overall, the proportion of survivors among males is 20.5%. However, among boys in the second class, the proportion is 91.7%. How do age and class contribute to this higher survival probability? Let us consider the following two explanations.

Explanation 1:

The overall probability of survival for males is 20.5%, but for the male passengers from the second class, the probability is even lower, i.e., 13.5%. Thus, the effect of the travel class is negative, as it decreases the probability of survival by 7 percentage points. Now, if, for male passengers of the second class, we consider their age, we see that the survival probability for boys increases by 78.2 percentage points, from 13.5% (for a male in the second class) to 91.7%. Thus, by considering first the effect of class, and then the effect of age, we can conclude the effect of \(-7\) percentage points for class and \(+78.2\) percentage points for age (being a boy).

Explanation 2:

The overall probability of survival for males is 20.5%, but for boys the probability is higher, i.e., 40.7%. Thus, the effect of age (being a boy) is positive, as it increases the survival probability by 20.2 percentage points. On the other hand, for boys, travelling in the second class increases the probability further from 40.7% overall to 91.7%. Thus, by considering first the effect of age, and then the effect of class, we can conclude the effect of \(+20.2\) percentage points for age (being a boy) and \(+51\) percentage points for class.

| Class | Boys (0-16) | Adults (>16) | Total |

|---|---|---|---|

| 2nd | 11/12 = 91.7% | 13/166 = 7.8% | 24/178 = 13.5% |

| other | 22/69 = 31.9% | 306/1469 = 20.8% | 328/1538 = 21.3% |

| Total | 33/81 = 40.7% | 319/1635 = 19.5% | 352/1716 = 20.5% |

Thus, by considering the effects of class and age in a different order, we get very different attributions (contributions attributed to the variables). This is because there is an interaction: the effect of class depends on age and vice versa. In particular, from Table 7.1 we could conclude that the overall effect of the second class is negative ( \(-7\) percentage points), as it decreases the probability of survival from 20.5% to 13.5%. On the other hand, the overall effect of being a boy is positive (\(+20.2\) percentage points), as it increases the probability of survival from 20.5% to 40.7%. Based on those effects, we would expect a probability of \(20.5\% - 7\% + 20.2\% = 33.7\%\) for a boy in the second class. However, the observed proportion of survivors is much higher, 91.7%. The difference \(91.7\% - 33.7\% = 58\%\) is the interaction effect. We can interpret it as an additional effect of the second class specific for boys, or as an additional effect of being a boy for the male passengers travelling in the second class.

The example illustrates that interactions complicate the evaluation of the importance of explanatory variables with respect to a model’s predictions. In the next section, we present an algorithm that allows including interactions in the BD plots.

7.2 Method

Identification of interactions in the model is performed in three steps (Gosiewska and Biecek 2019):

- For each explanatory variable, compute \(\Delta^{j|\emptyset}(\underline{x}_*)\) as in equation (6.8) in Section 6.3.2. The measure quantifies the additive contribution of each variable to the instance prediction.

- For each pair of explanatory variables, compute \(\Delta^{\{i,j\}|\emptyset}(\underline{x}_*)\) as in equation (6.8) in Section 6.3.2, and then the “net effect” of the interaction \[\begin{equation} \Delta^{\{i,j\}}_I(x_*) \equiv \Delta^{\{i,j\}|\emptyset}(\underline{x}_*)-\Delta^{i|\emptyset}(\underline{x}_*)-\Delta^{j|\emptyset}(\underline{x}_*). \tag{7.1} \end{equation}\] Note that \(\Delta^{\{i,j\}|\emptyset}(\underline{x}_*)\) quantifies the joint contribution of a pair of variables. Thus, \(\Delta^{\{i,j\}}_I(\underline{x}_*)\) measures the contribution related to the deviation from additivity, i.e., to the interaction between the \(i\)-th and \(j\)-th variable.

- Rank the so-obtained measures for individual explanatory variables and interactions to determine the final ordering for computing the variable-importance measures. Using the ordering, compute variable-importance measures \(v(j, \underline{x}_*)\), as defined in equation (6.9) in Section 6.3.2.

The time complexity of the first step is \(O(p)\), where \(p\) is the number of explanatory variables. For the second step, the complexity is \(O(p^2)\), while for the third step it is \(O(p)\). Thus, the time complexity of the entire procedure is \(O(p^2)\).

7.3 Example: Titanic data

Let us consider the random forest model titanic_rf (see Section 4.2.2) and passenger Johnny D (see Section 4.2.5) as the instance of interest in the Titanic data.

Table 7.2 presents single-variable contributions \(\Delta^{j|\emptyset}(\underline{x}_*)\), paired-variable contributions \(\Delta^{\{i,j\}|\emptyset}(\underline{x}_*)\), and interaction contributions \(\Delta_{I}^{\{i,j\}}(\underline{x}_*)\) for each explanatory variable and each pair of variables. All the measures are calculated for Johnny D, the instance of interest.

| Variable | \(\Delta^{\{i,j\}|\emptyset}(\underline{x}_*)\) | \(\Delta_{I}^{\{i,j\}}(\underline{x}_*)\) | \(\Delta^{i|\emptyset}(\underline{x}_*)\) |

|---|---|---|---|

| age | 0.270 | ||

| fare:class | 0.098 | -0.231 | |

| class | 0.185 | ||

| fare:age | 0.249 | -0.164 | |

| fare | 0.143 | ||

| gender | -0.125 | ||

| age:class | 0.355 | -0.100 | |

| age:gender | 0.215 | 0.070 | |

| fare:gender | |||

| embarked | -0.011 | ||

| embarked:age | 0.269 | 0.010 | |

| parch:gender | -0.136 | -0.008 | |

| sibsp | 0.008 | ||

| sibsp:age | 0.284 | 0.007 | |

| sibsp:class | 0.187 | -0.006 | |

| embarked:fare | 0.138 | 0.006 | |

| sibsp:gender | -0.123 | -0.005 | |

| fare:parch | 0.145 | 0.005 | |

| parch:sibsp | 0.001 | -0.004 | |

| parch | -0.003 | ||

| parch:age | 0.264 | -0.002 | |

| embarked:gender | -0.134 | 0.002 | |

| embarked:parch | -0.012 | 0.001 | |

| fare:sibsp | 0.152 | 0.001 | |

| embarked:class | 0.173 | -0.001 | |

| gender:class | 0.061 | 0.001 | |

| embarked:sibsp | -0.002 | 0.001 | |

| parch:class | 0.183 | 0.000 |

The table illustrates the calculation of the contributions of interactions. For instance, the additive contribution of age is equal to 0.270, while for fare it is equal to 0.143. The joint contribution of these two variables is equal to 0.249. Hence, the contribution attributed to the interaction is equal to \(0.249 - 0.270 - 0.143 = -0.164\).

Note that the rows of Table 7.2 are sorted according to the absolute value of the net contribution of the single explanatory variable or the net contribution of the interaction between two variables. For a single variable, the net contribution is simply measured by \(\Delta^{j|\emptyset}(\underline{x}_*)\), while for an interaction it is given by \(\Delta_{I}^{\{i,j\}}(\underline{x}_*)\). In this way, if two variables are important and there is little interaction, then the net contribution of the interaction is smaller than the contribution of any of the two variables. Consequently, the interaction will be ranked lower. This is the case, for example, of variables age and gender in Table 7.2. On the other hand, if the interaction is important, then its net contribution will be larger than the contribution of any of the two variables. This is the case, for example, of variables fare and class in Table 7.2.

Based on the ordering of the rows in Table 7.2, the following sequence of variables is identified as informative:

- age, because it has the largest (in absolute value) net contribution equal to 0.270;

- fare:class interaction, because its net contribution (-0.231) is the second largest (in absolute value);

- gender, because variables class and fare are already accounted for in the fare:class interaction and the net contribution of gender, equal to 0.125, is the largest (in absolute value) among the remaining variables and interactions;

- embarked harbor (based on a similar reasoning as for gender);

- then sibsp and parch as variables with the smallest net contributions (among single variables), which are larger than the contribution of their interaction.

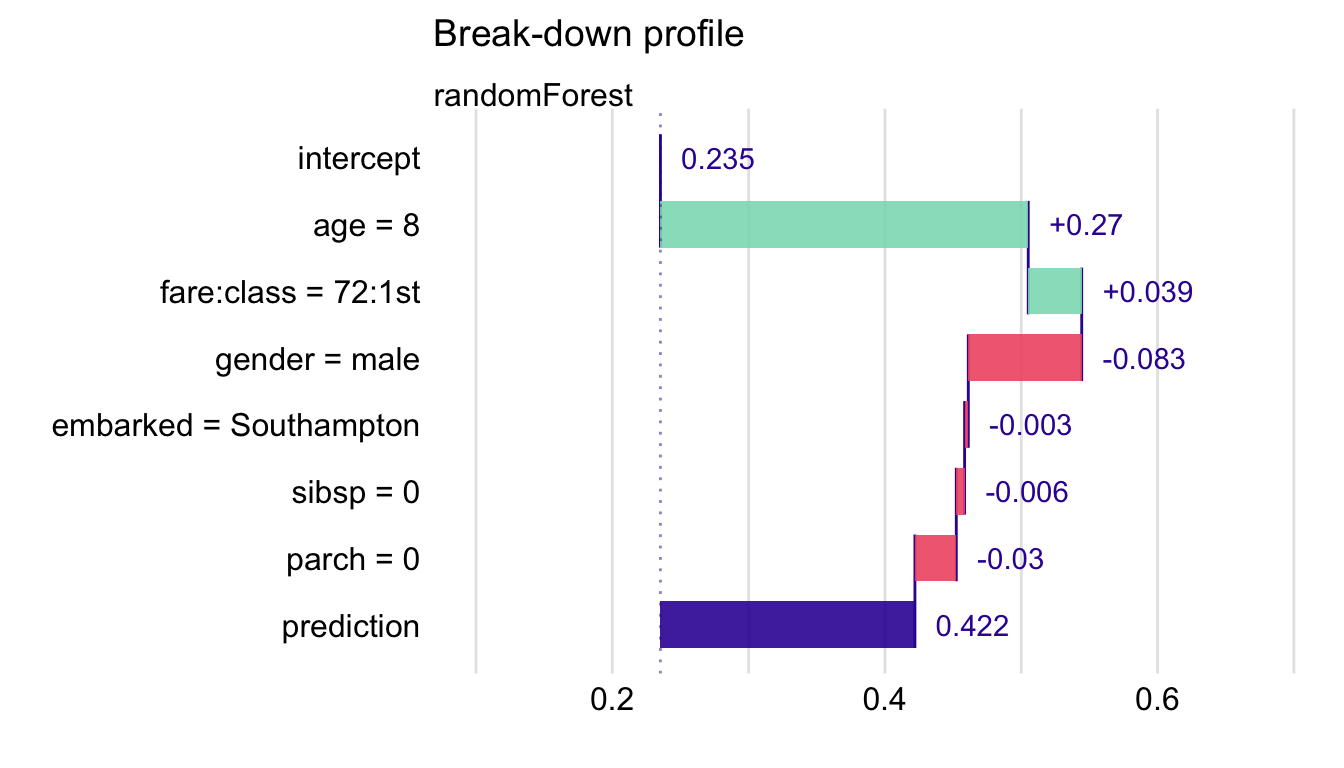

Table 7.3 presents the variable-importance measures computed by using the following ordering of explanatory variables and their pairwise interactions: age, fare:class, gender, embarked, sibsp, and parch. The table presents also the conditional expected values (see equations (6.5) and (6.9) in Section 6.3.2) \[E_{\underline{X}}\left\{f(\underline{X}) | \underline{X}^{\{1,\ldots,j\}} = \underline{x}^{\{1,\ldots,j\}}_*\right\}=v_0+\sum_{k=1}^j v(k,\underline{x}_*)=v_0+\Delta^{\{1,\ldots\,j\}|\emptyset}(\underline{x}_*).\] Note that the expected value presented in the last row, 0.422, corresponds to the model’s prediction for the instance of interest, passenger Johnny D.

| Variable | \(j\) | \(v(j,\underline{x}_*)\) | \(v_0+\sum_{k=1}^j v(k,\underline{x}_*)\) |

|---|---|---|---|

| intercept (\(v_0\)) | 0.235 | ||

| age = 8 | 1 | 0.269 | 0.505 |

| fare:class = 72:1st | 2 | 0.039 | 0.544 |

| gender = male | 3 | -0.083 | 0.461 |

| embarked = Southampton | 4 | -0.002 | 0.458 |

| sibsp = 0 | 5 | -0.006 | 0.452 |

| parch = 0 | 6 | -0.030 | 0.422 |

Figure 7.1 presents the interaction-break-down (iBD) plot corresponding to the results shown in Table 7.3. The interaction between fare and class variables is included in the plot as a single bar. As the effects of these two variables cannot be disentangled, the plot uses just that single bar to represent the contribution of both variables. Table 7.2 indicates that class alone would increase the mean prediction by 0.185, while fare would increase the mean prediction by 0.143. However, taken together, they increase the average prediction only by 0.098. A possible explanation of this negative interaction could be that, while the ticket fare of 72 is high on average, it is actually below the median when the first-class passengers are considered. Thus, if first-class passengers with “cheaper” tickets, as Johnny D, were, for instance, placed in cabins that made it more difficult to reach a lifeboat, this could lead to lower chances of survival as compared to other passengers from the same class (though the chances could be still higher as compared to passengers from other, lower travel classes).

Figure 7.1: Break-down plot with interactions for the random forest model and Johnny D for the Titanic data.

7.4 Pros and cons

iBD plots share many advantages and disadvantages of BD plots for models without interactions (see Section 6.5). However, in the case of models with interactions, iBD plots provide more correct explanations.

Though the numerical complexity of the iBD procedure is quadratic, it may be time-consuming in case of models with a large number of explanatory variables. For a model with \(p\) explanatory variables, we have got to calculate \(p*(p+1)/2\) net contributions for single variables and pairs of variables. For datasets with a small number of observations, the calculations of the net contributions will be subject to a larger variability and, therefore, larger randomness in the ranking of the contributions.

It is also worth noting that the presented procedure of identification of interactions is not based on any formal statistical-significance test. Thus, the procedure may lead to false-positive findings and, especially for small sample sizes, false-negative errors.

7.5 Code snippets for R

In this section, we use the DALEX package, which is a wrapper for iBreakDown R package. The package covers all methods presented in this chapter. It is available on CRAN and GitHub.

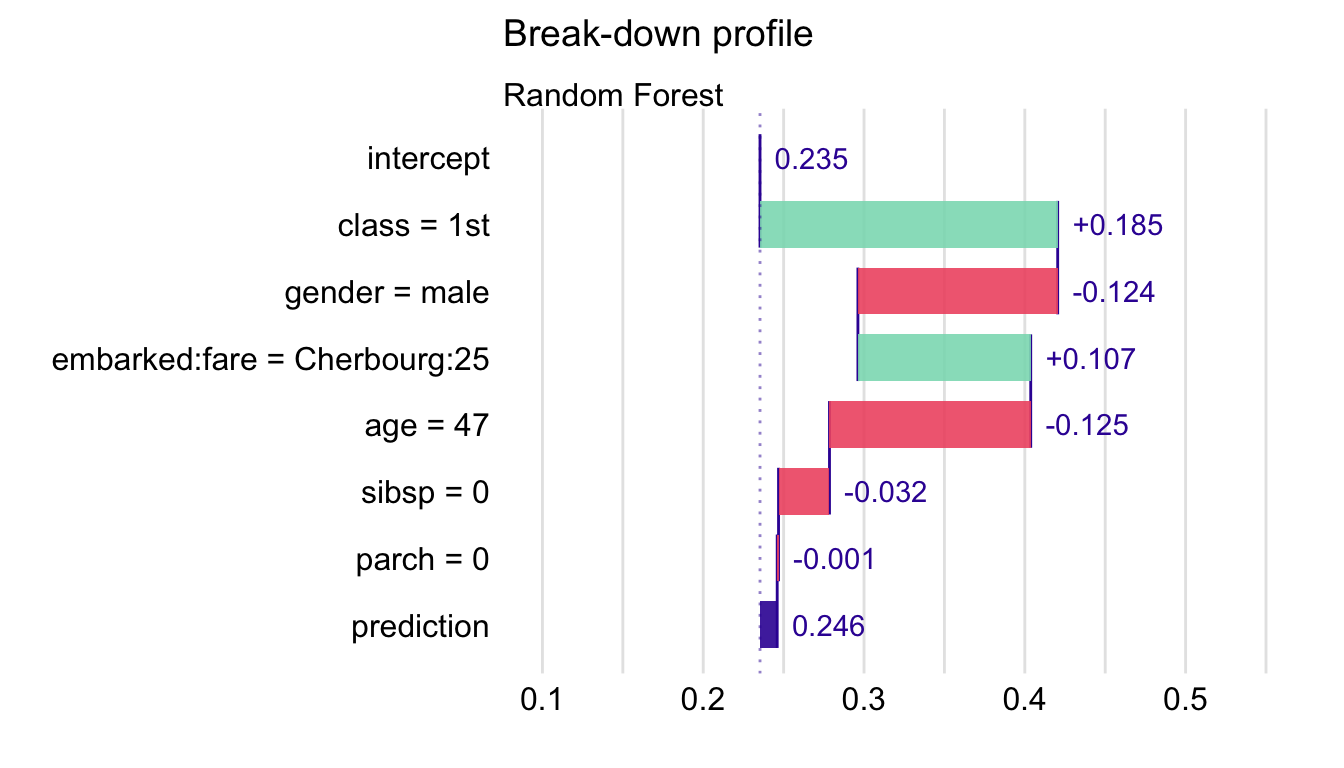

For illustration purposes, we use the titanic_rf random forest model for the Titanic data developed in Section 4.2.2. Recall that the model is constructed to predict the probability of survival for passengers of Titanic. Instance-level explanations are calculated for Henry, a 47-year-old passenger that travelled in the first class (see Section 4.2.5).

First, we retrieve the titanic_rf model-object and the data for Henry via the archivist hooks, as listed in Section 4.2.7. We also retrieve the version of the titanic data with imputed missing values.

titanic_imputed <- archivist::aread("pbiecek/models/27e5c")

titanic_rf <- archivist:: aread("pbiecek/models/4e0fc")

(henry <- archivist::aread("pbiecek/models/a6538")) class gender age sibsp parch fare embarked

1 1st male 47 0 0 25 CherbourgThen we construct the explainer for the model by using the function explain() from the DALEX package (see Section 4.2.6). Note that, beforehand, we have got to load the randomForest package, as the model was fitted by using function randomForest() from this package (see Section 4.2.2) and it is important to have the corresponding predict() function available.

library("DALEX")

library("randomForest")

explain_rf <- DALEX::explain(model = titanic_rf,

data = titanic_imputed[, -9],

y = titanic_imputed$survived == "yes",

label = "Random Forest")The key function to construct iBD plots is the DALEX::predict_parts() function. The use of the function has already been explained in Section 6.6. In order to perform calculations that allow obtaining iBD plots, the required argument is type = "break_down_interactions".

bd_rf <- predict_parts(explainer = explain_rf,

new_observation = henry,

type = "break_down_interactions")

bd_rf## contribution

## Random Forest: intercept 0.235

## Random Forest: class = 1st 0.185

## Random Forest: gender = male -0.124

## Random Forest: embarked:fare = Cherbourg:25 0.107

## Random Forest: age = 47 -0.125

## Random Forest: sibsp = 0 -0.032

## Random Forest: parch = 0 -0.001

## Random Forest: prediction 0.246We can compare the obtained variable-importance measures to those reported for Johnny D in Table 7.3. For Henry, the most important positive contribution comes from class, while for Johnny D it is age. Interestingly, for Henry, a positive contribution of the interaction between embarked harbour and fare is found. For Johnny D, a different interaction was identified: for fare and class. Finding an explanation for this difference is not straightforward. In any case, in those two instances, the contribution of fare appears to be modified by effects of other variable(s), i.e., its effect is not purely additive.

By applying the generic plot() function to the object created by the DALEX::predict_parts() function we obtain the iBD plot.

Figure 7.2: Break-down plot with interactions for the random forest model and Henry for the Titanic data, obtained by applying the generic plot() function in R.

The resulting iBD plot for Henry is shown in Figure 7.2. It can be compared to the iBD plot for Johnny D that is presented in Figure 7.1.

7.6 Code snippets for Python

In this section, we use the dalex library for Python. The package covers all methods presented in this chapter. It is available on pip and GitHub.

For illustration purposes, we use the titanic_rf random forest model for the Titanic data developed in Section 4.3.2. Instance-level explanations are calculated for Henry, a 47-year-old passenger that travelled in the first class (see Section 4.3.5).

In the first step, we create an explainer-object that provides a uniform interface for the predictive model. We use the Explainer() constructor for this purpose (see Section 4.3.6).

import pandas as pd

henry = pd.DataFrame({'gender': ['male'], 'age': [47],

'class': ['1st'],

'embarked': ['Cherbourg'], 'fare': [25],

'sibsp': [0], 'parch': [0]},

index = ['Henry'])

import dalex as dx

titanic_rf_exp = dx.Explainer(titanic_rf, X, y,

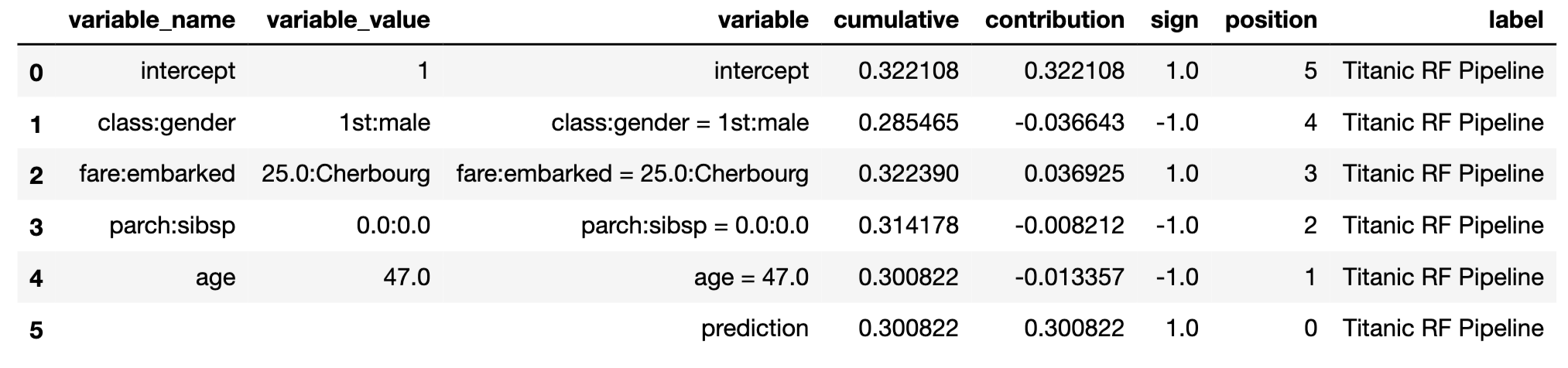

label = "Titanic RF Pipeline")To calculate the attributions with the break-down method with interactions, we use the predict_parts() method with type='break_down_interactions' argument (see Section 6.7). The first argument indicates the data for the observation for which the attributions are to be calculated.

Interactions are often weak and their net effects are not larger than the contributions of individual variables. If we would like to increase our preference for interactions, we can use the interaction_preference argument. The default value of \(1\) means no preference, while larger values indicate a larger preference. Results are stored in the result field.

bd_henry = titanic_rf_exp.predict_parts(henry,

type = 'break_down_interactions',

interaction_preference = 10)

bd_henry.result

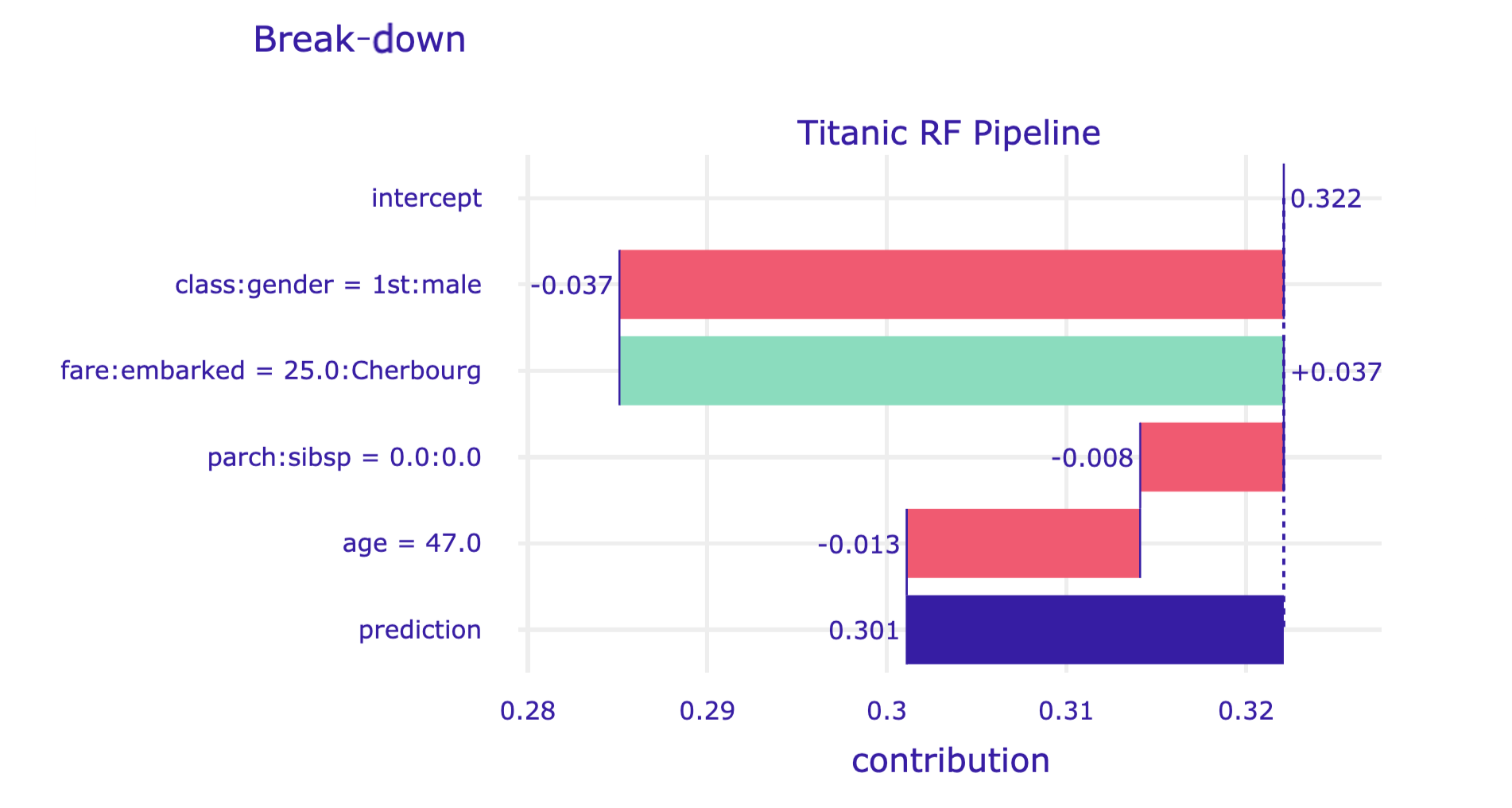

By applying the plot() method to the resulting object, we construct the corresponding iBD plot.

The resulting plot for Henry is shown in Figure 7.3.

Figure 7.3: Break-down plot with interactions for the random forest model and Henry for the Titanic data, obtained by applying the plot() method in Python.

References

Gosiewska, Alicja, and Przemyslaw Biecek. 2019. iBreakDown: Uncertainty of Model Explanations for Non-additive Predictive Models. https://arxiv.org/abs/1903.11420v1.